Concepts are the building blocks of our semantic memory (that is, our knowledge about the world), and underlie all higher-level cognitive processes (K. O. Solomon et al., 1999). They serve numerous functions, including inference and reasoning.

One of the best-studied functions is identifying whether an entity belongs to a certain category, a process called categorisation.

In this post I will discuss several theories of how concepts are organised in our brain, and look at the different approaches to the categorisation process.

Network models

Our semantic memory is highly organised and structured; this has been shown by numerous studies using methods such as semantic priming. The real question is how it is structured in our brain. One of the oldest ideas in this respect is hierarchical organisation. Rosch et al. (1976) distinguished three levels of categories:

1. Superordinate categories (e.g. furniture)

2. Basic-level categories (e.g. chair)

3. Subordinate categories (e.g. easy chair)

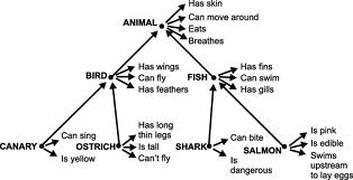

The first model of semantic memory was based on such a hierarchical structure, and was suggested by Collins and Quillian (1969). Concepts are represented as nodes (e.g. animal), and each concept has properties or features (e.g. has skin); see below.

Notice that the property 'can swim' is stored at the 'fish' level only, and is not associated directly with 'shark' or 'salmon'. The assumed principle here is cognitive economy: features are stored as high up in the hierarchy as possible to minimise the amount of stored information. To test this, Collins and Quillian conducted a study in which participants had to decide whether a sentence was true or false. Indeed, it took less time to verify sentences in which the subject and the property were at the same level (e.g. 'Ostrich can't fly') than sentences in which they were separated by one or more levels of the hierarchy (e.g. 'Ostrich has feathers').
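
To make the idea of cognitive economy concrete, here is a minimal sketch of such a hierarchy in Python. The node names, the properties and the function `levels_to_property` are my own illustrative assumptions, not Collins and Quillian's actual materials; the only point is that a property stored higher up takes more steps to reach, which is what the model uses to predict longer verification times.

```python
# Hypothetical toy network: each concept points to its parent category.
PARENT = {
    "animal": None,
    "bird": "animal",
    "fish": "animal",
    "canary": "bird",
    "ostrich": "bird",
    "shark": "fish",
    "salmon": "fish",
}

# Cognitive economy: each property is stored once, as high up as possible.
PROPERTIES = {
    "animal": {"has skin"},
    "bird": {"has feathers"},
    "fish": {"can swim"},
    "canary": {"can sing"},
    "ostrich": {"can't fly"},
    "shark": {"can bite"},
    "salmon": {"is pink"},
}


def levels_to_property(concept, prop):
    """Count the links climbed from `concept` until `prop` is found.

    Returns None if the property is not stored anywhere above the concept.
    """
    steps = 0
    node = concept
    while node is not None:
        if prop in PROPERTIES.get(node, set()):
            return steps
        node = PARENT[node]
        steps += 1
    return None


print(levels_to_property("ostrich", "can't fly"))     # 0: stored at the same level
print(levels_to_property("ostrich", "has feathers"))  # 1: stored one level up
print(levels_to_property("shark", "has skin"))        # 2: stored two levels up
```

In this sketch the number of levels is only a stand-in for reaction time; it says nothing about familiarity or typicality.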

The model proved to have several limitations. The first is linked to familiarity: we rarely encounter sentences such as 'ostrich has feathers' or 'salmon can swim' in everyday life, while we have probably heard 'ostrich can't fly' and 'salmon is pink' before. Conrad (1972) found that when familiarity was accounted for, reaction times were not significantly different for the two types of sentences.

Another problem arose when it was found that sentences such as 'ostrich is a bird' take more time to process than 'canary is a bird', while, according to the Collins and Quillian model, they should take the same amount of time to verify. This finding gave rise to the idea of typicality, which is discussed later in this post.

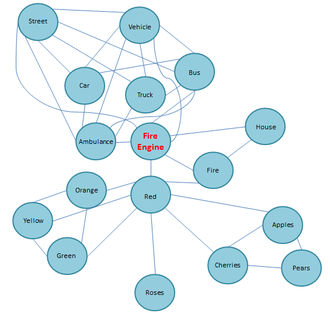

Collins and Loftus (1975) put forward another model, based on their spreading activation theory. In their view, logically organised hierarchies were too inflexible. They suggested that semantic memory is organised on the basis of semantic relatedness, which they assessed by asking people how closely related they thought pairs of words were. For example, 'red' is more closely related to 'oranges' than to 'roses'.

According to the model, each time we hear a word or encounter some entity, the appropriate node is activated and spreads activation to related concepts: the closer they are, the more strongly they are activated. This theory is much more flexible; however, it is difficult to assess its overall adequacy, as it does not make very precise predictions. Different people may judge different pairs of concepts to be more or less related.
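
As a rough illustration of how activation might spread, here is a small Python sketch. Everything in it is an assumption made for the example: the relatedness values are invented, and multiplying activation by link strength (with a cut-off threshold) is just one simple way to make closer concepts receive more activation. It is not the formal model of Collins and Loftus.

```python
from collections import defaultdict

# Hypothetical relatedness ratings between 0 and 1 (invented for illustration).
LINKS = {
    ("red", "oranges"): 0.8,
    ("red", "roses"): 0.5,
    ("red", "fire"): 0.7,
    ("fire", "heat"): 0.9,
}


def build_graph(links):
    """Turn the pair ratings into an undirected weighted graph."""
    graph = defaultdict(dict)
    for (a, b), strength in links.items():
        graph[a][b] = strength
        graph[b][a] = strength
    return graph


def spread_activation(graph, source, threshold=0.1):
    """Spread activation outwards from `source`.

    Each link passes on the sender's activation multiplied by the link
    strength; a node keeps the strongest activation it receives, and
    activation below `threshold` is not passed on.
    """
    activation = {source: 1.0}
    frontier = [source]
    while frontier:
        node = frontier.pop()
        for neighbour, strength in graph[node].items():
            incoming = activation[node] * strength
            if incoming >= threshold and incoming > activation.get(neighbour, 0.0):
                activation[neighbour] = incoming
                frontier.append(neighbour)
    return activation


activation = spread_activation(build_graph(LINKS), "red")
for concept, level in sorted(activation.items(), key=lambda kv: -kv[1]):
    print(f"{concept}: {level:.2f}")
```

With these made-up ratings, 'oranges' ends up more active than 'roses', and 'heat' is activated only indirectly through 'fire'. Change the ratings and the pattern changes, which is exactly why the theory is hard to test precisely.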

Other theories of concept organisation

Other theories of how our knowledge of concepts is organised in the brain include the perceptual-functional theory and the multiple-property approach. I will briefly outline their main ideas before moving on to the categorisation process.

1. Perceptual-functional theory

The idea was first put forward by Warrington and Shallice (1984). According to the theory, perceptual information (how an object looks, sounds etc.) and functional information (what it is used for) are stored in different parts of the brain. It is assumed that our knowledge of 'living' things is mostly based on perceptual information, while our knowledge of 'non-living' things is mostly based on functional information. Another assumption is that much more perceptual than functional information is stored in our brain.

Indeed, many brain-damaged patients have problems processing specific categories of objects. Martin and Caramazza (2003) reviewed such cases: more than 100 of the patients studied had a category-specific deficit in identifying living things but not non-living things, while 25 patients showed the opposite pattern.

Some neuroimaging studies have also found evidence for the theory. Lee et al. (2002) asked healthy participants to retrieve perceptual or functional information about living or non-living things. Left posterior temporal lobe regions were active during the processing of perceptual information, and left posterior inferior temporal lobe regions during the processing of functional information. However, it did not matter which type of concept was processed, living or non-living.

2. Multiple-property approach

The perceptual-functional theory has several limitations. For one, it seems somewhat oversimplified. Many properties of living things are not perceptual, yet cannot be called functional either (e.g. 'lives in an ocean').

The multiple-property approach recognises that most concepts consist of several properties. It divides functional features into entity behaviour (what an object does) and functional information (what we can do with an object). Perceptual information is also divided into visual, auditory, tactile and taste. Cree and McRae (2003) identified seven different patterns of category-specific deficits following brain damage, rather than only two ('living' vs. 'non-living'). This approach is consistent with brain-imaging findings that different concept properties are stored in different parts of the brain (e.g. Martin & Chao, 2001).

Defining-attribute approach to categorisation

Categorising novel entities allows us to apply relevant knowledge about the category in order to understand their properties. For example, classifying a novel object as a 'kettle' enables us to understand its purpose and functions even if we haven't seen this particular kettle before. In this respect, categorisation helps to minimise cognitive effort when encountering novel entities.

The classical theory of categorisation is the defining-attribute approach. The idea is that a category is defined by a set of attributes, each of which is individually necessary: if an item lacks even one of the attributes deemed necessary, it isn't a member of that category, no matter what other features it has. If it does have all the necessary attributes, it is a member of the category, no matter what other attributes it has or lacks.

According to this theory, category membership is all-or-none. All members are equally representative of a category: that is, a lion is as much a 'mammal' as a platypus is. People have stored knowledge of these attributes, and apply it to novel entities in order to categorise and understand them.
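
The all-or-none rule is simple enough to write down directly. The sketch below is only an illustration: the attribute names and the `is_member` function are assumptions I made up for the example, and real defining attributes are much harder to pin down.

```python
# Hypothetical defining attributes for one category (illustrative only).
DEFINING_ATTRIBUTES = {
    "mammal": {"produces milk", "breathes air", "has hair"},
}


def is_member(item_attributes, category):
    """All-or-none membership: every defining attribute must be present.

    Extra attributes of the item are ignored, and there is no notion of
    a 'better' or 'worse' member.
    """
    return DEFINING_ATTRIBUTES[category] <= set(item_attributes)


lion = {"produces milk", "breathes air", "has hair", "has a mane"}
platypus = {"produces milk", "breathes air", "has hair", "lays eggs"}
trout = {"breathes water", "has scales"}

print(is_member(lion, "mammal"))      # True
print(is_member(platypus, "mammal"))  # True, and equally so
print(is_member(trout, "mammal"))     # False
```

Note that the function can only ever answer yes or no, which is precisely what the theory claims about categories.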

Criticism

There has been a lot of criticism of this theory. Firstly, its categories are far too clear-cut; in reality, boundaries are often very 'fuzzy'. McCloskey & Glucksberg (1978) found that categorisation differed not only between participants but also within participants when they were asked to categorise the same items a month later. This encouraged the idea that category boundaries are fuzzy while centres (prototypes) are clear.

Similarly, Rosch & Mervis (1975) suggested that some concepts are considered more typical of a category than others, and that category membership appears to be graded rather than equal. This idea led to the typicality (prototype) theory of categorisation, which is discussed below.

Thirdly, it may be difficult to establish a set of defining attributes for many concepts, especially abstract ones. For example, everyone can tell whether something is or isn't a game, but what attributes does a game have? Moreover, our beliefs about which attributes are necessary for a particular category can change with experience (Rey, 1983) or simply be wrong (McNamara & Sternberg, 1983). Thus, our knowledge about defining attributes is unstable.

For example, let's take the category 'mammal' and two items: a cat and a whale. Firstly, we need some knowledge of what the defining attributes of a 'mammal' are: let's say, it produces milk to feed its offspring, it needs air to breathe and it is covered with hair. You might know straight away that a cat possesses these attributes, but you might not have enough experience with whales to know for sure, and thus may categorise them as 'fish'. This does not make a whale any less of a mammal, nor does it make the boundary between the categories 'fish' and 'mammal' any less clear-cut. However, it shows that knowledge and experience do affect our beliefs about defining attributes. Now, if there are no consequences of incorrect categorisation, the boundaries may remain fuzzy; however, if there are consequences (such as an 'E' grade in biology), a clear boundary may emerge very quickly.

Prototype theory

Unlike the classical theory, the prototype theory of categorisation is based on the idea of graded categories. It suggests that each category has more and less central, or typical, members. For example, a robin is a more typical 'bird' than a penguin is.

The term 'prototype' was first defined by Eleanor Rosch (1973). A prototype need not be a specific, real-life item or entity that has actually been encountered. Rather, it is a set of the most characteristic attributes of a category, and it differs from one person to another. For example, the prototype of a 'dog' may not be a particular breed of dog but rather a set of characteristics such as four paws, fur and a tail. The fact that some dogs do not have a tail (or fur) does not exclude them from the category altogether, but makes them less typical, because they possess fewer features of the prototype.

Some evidence for more and less central members of categories came from studies which measured response time: more typical members are categorised more quickly than less typical ones. This phenomenon is referred to as the typicality effect. Studies involving priming showed that when primed with a superordinate category (e.g. 'bird'), participants were quicker to judge whether two words were the same when the words named more typical members (e.g. robin-robin vs. ostrich-ostrich).

Coming back to the notion of hierarchies, we tend to use basic-level categories more frequently in everyday life; for example, if asked what we sit on, we answer 'chair' rather than 'stool' or 'furniture'. A category like 'furniture' may have a prototypical member, but no cognitive visual representation. On the other hand, basic-level categories within 'furniture' ('chair', 'table', 'bed') are full of informational content and can easily be defined in terms of semantic features.

Rosch (1978) suggests that this level has the highest degree of informational validity. According to her, the categorisation process occurs at the basic level by comparing the features of a novel entity to those of a prototype; the more features they hold in common, the more prototypical the stimulus appears to be.
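
The feature comparison described above can be sketched as a simple overlap score. This is a deliberately naive illustration: the prototype features follow the 'dog' example earlier in this section, while the `typicality` function and the equal weighting of all features are my own assumptions, not Rosch's procedure.

```python
# Prototype as a set of characteristic features (from the 'dog' example).
DOG_PROTOTYPE = {"four paws", "fur", "tail"}


def typicality(item_features, prototype):
    """Fraction of the prototype's features that the item shares (0.0 to 1.0)."""
    return len(set(item_features) & prototype) / len(prototype)


labrador = {"four paws", "fur", "tail", "likes water"}
tailless_dog = {"four paws", "fur"}

print(typicality(labrador, DOG_PROTOTYPE))      # 1.0
print(typicality(tailless_dog, DOG_PROTOTYPE))  # about 0.67: still a dog, just less typical
```

Unlike the all-or-none rule of the defining-attribute approach, the score is graded, so a missing feature lowers typicality without excluding the item from the category.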

Exemplar theory

Hampton (1980) noticed that not all concepts have prototypical characteristics, particularly abstract ones (e.g. joy). Exemplar theory seems to work better with abstract concepts. It is based on the idea that we hold specific remembered instances of category members in memory. For example, your exemplar of a 'dog' may be your bloodhound Lilly. As for abstract concepts, an exemplar of joy could be the memory of getting a puppy last Christmas. According to this theory, we categorise by comparing new entities with specific exemplars rather than with a prototypical set of characteristics. Thus, it also accounts for the typicality effect in image recognition tasks.

However, although both theories involve comparing a stimulus to a stored reference (a prototype or an exemplar), exemplars tend to be affected by the context of a given situation, and items with high typicality scores in one context may have low typicality in another. Typicality also clearly depends on a person's experience: if your chihuahua is the most frequently encountered exemplar of a 'dog', then little dogs will seem more typical to you than to people who own large dogs.
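
The dependence on personal experience can be shown with a small sketch. All of it is assumed for illustration: the feature sets, the use of Jaccard overlap as a similarity measure, and averaging over remembered exemplars are my simplifications, not a published exemplar model.

```python
def similarity(a, b):
    """Jaccard overlap between two feature sets (1.0 means identical)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def typicality_from_exemplars(item, exemplars):
    """Mean similarity of `item` to a person's remembered exemplars."""
    return sum(similarity(item, e) for e in exemplars) / len(exemplars)


# Hypothetical feature sets for two kinds of dog.
small_dog = {"small", "fur", "tail", "barks"}
large_dog = {"large", "fur", "tail", "barks"}

# Two people with different experience: each list is their remembered 'dogs'.
chihuahua_owner = [small_dog, small_dog, large_dog]
mastiff_owner = [large_dog, large_dog, small_dog]

print(typicality_from_exemplars(small_dog, chihuahua_owner))
print(typicality_from_exemplars(small_dog, mastiff_owner))
```

The same small dog scores as more typical for the chihuahua owner than for the mastiff owner, simply because their stored exemplars differ.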
